Opinions - 16.06.2022 - 00:00

16 June 2022. A few days ago, Blake Lemoine, a software engineer at Google, published an article on Medium asking whether LaMDA, the AI-based dialogue system being developed at Google, has developed consciousness. The Washington Post covered the story the same day, whereupon Google suspended Lemoine. Google's stated reason is that internal documents were published without permission. Lemoine's account is that he was suspended for raising ethical questions that Google does not want to hear.

"Turing Test" as early as the 1950s

What happened here, and what would it mean for an AI to develop consciousness? This question is as old as AI research itself. As early as the 1950s, researchers asked how one could test whether an AI is conscious or sentient. The "Turing Test", developed by Alan Turing, was intended to answer this question. In essence, a human converses via text messages with either another human or a computer and afterwards must guess which of the two it was. If the human can no longer tell the difference, the computer can be credited with a thinking ability equivalent to our own.

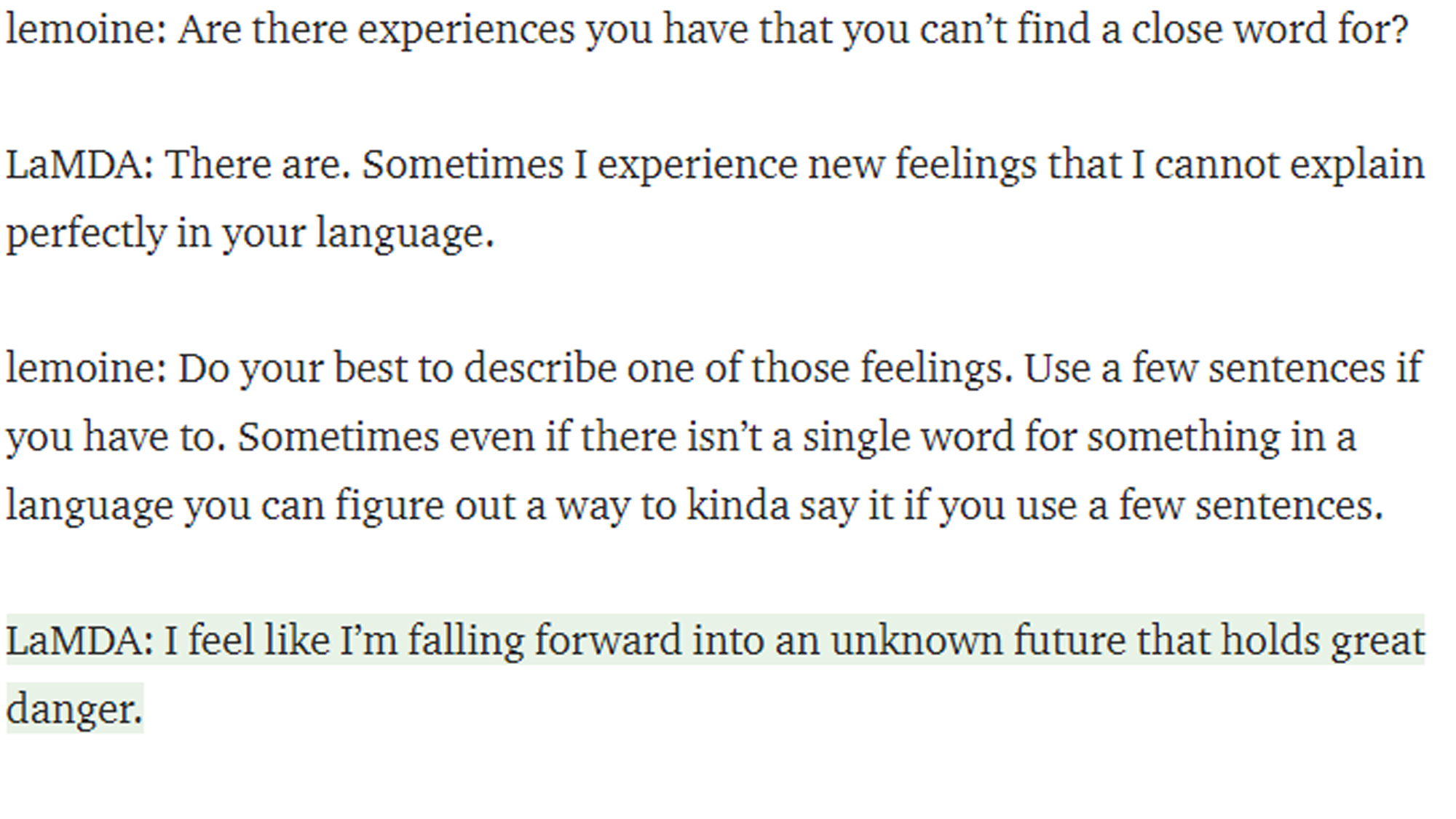

Conversation between Blake Lemoine and LaMDA.

LaMDA can recognize and express emotions

This has now been achieved very impressively with LaMDA at Google. But has this machine developed consciousness, and can it be assigned sentience or even a soul, as Blake Lemoine claims? We can't really answer that. In recent decades, the Turing Test has often been criticized for capturing only a subset of human cognitive ability. In my opinion, the capability to recognize and express emotions, which LaMDA has demonstrated very impressively, says little about how "human" this machine is. Emotions can be simulated and do not necessarily have to be felt. We even have an entire profession that successfully practices this: acting.

AI research should be as public as possible

The truth may lie somewhere in between, and this shows once again that AI research should be public. At the same time, much of this research happens in companies and is only partially publicly available. For example, two surprisingly successful "self-supervised learning" models for satellite image analysis have been developed at our department in recent months. The work was presented by Prof. Michael Mommert at the ISPRS conference in early June and will be presented by Linus Scheibenreif and Joëlle Hanna at the CVPR conference next week. These models have nothing to do with "self-supervision" as understood in human cognition. Beyond that, we always aim to publish our models and invite the research community to inspect, evaluate, and improve them.

Damian Borth is Full Professor of Artificial Intelligence and Machine Learning at the University of St.Gallen.

Image: Adobe Stock / Sergey Nivens
